Data Management, Governance & AI Tools

Disclaimer and Limitations of Liability

Nature of This Report

This report has been prepared and published for general informational purposes. All assessments, characterizations, and statements regarding the strengths and weaknesses of tools, platforms, and vendors represent the independent professional opinions of the authors, formed on the basis of publicly available information as of the research date shown on the cover. They are expressions of opinion and analytical judgement, not statements of verified fact or objective measurement. Nothing in this report should be construed as a definitive evaluation of any product or organization.

Fair Comment and Editorial Independence

This report is published as independent research commentary. No vendor, product company, investor, or other commercial party has sponsored, funded, commissioned, or otherwise influenced its contents. No vendor was paid for inclusion, and no vendor received preferential treatment in exchange for any consideration. The authors have no financial interest in any of the tools or vendors assessed herein. Assessments reflect the authors' honest opinion based on available evidence and are made in good faith without malicious intent.

Accuracy and Currency

The data management and governance tools market evolves rapidly. Product capabilities, pricing, deployment models, ownership structures, and competitive positioning described in this report reflect information available at the research date. The authors make no warranty, express or implied, that this information is accurate, complete, or current at the time of reading. Capabilities and market positions may have changed materially since publication. Readers should independently verify all information directly with vendors before making any procurement, investment, or strategic decisions.

Right to Correct

Vendors or organizations who believe a specific factual statement in this report is materially inaccurate are invited to submit corrections with supporting evidence. The authors will review and, where warranted, publish a correction. This process does not apply to assessments of opinion or analytical judgement, which remain the sole prerogative of the authors.

No Advisory Relationship

This report does not constitute procurement advice, investment advice, legal advice, or any other form of professional advisory service. No reader should rely on this report as the sole or primary basis for any decision. The authors and their organization accept no liability for any loss, damage, or adverse outcome (direct, indirect, or consequential) arising from reliance on any content in this report.

Permitted Use

This report may be shared, cited, and quoted freely for non-commercial purposes provided the source is attributed and no content is altered or presented out of context. Use of excerpts in commercial procurement processes, vendor evaluations, or investor materials is permitted provided the full disclaimer is included or prominently referenced. The report may not be republished in full or in substantially modified form without prior written consent.

Trademarks

All product names, company names, logos, and trademarks referenced in this report are the property of their respective owners. Their use is solely for identification and commentary purposes and does not imply affiliation with or endorsement by those owners.

Executive Summary

The modern data ecosystem has expanded dramatically over the past decade, shifting from monolithic data warehouse architectures toward highly distributed, cloud-native platforms augmented by AI at every layer. Organizations face a complex matrix of tooling choices spanning data acquisition, movement, transformation, governance, quality, analytics, and intelligence.

This Element22 Research Report provides a structured analysis of 33 tool categories making up the contemporary data management and governance landscape. For each category the leading commercial and open-source products are identified, capabilities are assessed against modern requirements, and architectural considerations relevant to enterprise data strategy are highlighted.

Key Findings

The market is consolidating around a small number of cloud data platforms — principally Snowflake, Databricks, Google BigQuery, and Microsoft Fabric — each expanding horizontally to absorb adjacent tool categories. This consolidation is driven both by vendors seeking larger addressable markets and by enterprise clients wanting fewer integration points and simpler licensing structures.

Data governance, quality, and observability have matured from optional add-ons into first-class architectural requirements, pushed by regulatory pressure (GDPR, CCPA, HIPAA, DORA, EU AI Act) and the practical need for trustworthy AI training data.

Open table formats (Apache Iceberg, Delta Lake, Apache Hudi) are reshaping analytics storage, enabling multi-engine interoperability and dissolving the hard boundary between data lakes and data warehouses.

Unstructured data, accounting for 80–90% of enterprise data by volume, is finally receiving proper tooling attention. Document intelligence, content governance, and unstructured data cataloging have moved from niche requirements to mainstream priorities, particularly as organizations use LLMs to extract value from documents, emails, contracts, and media.

AI-native capabilities are embedded across every category. Auto-profiling, natural-language querying, intelligent pipeline generation, and anomaly detection are now expected features, not differentiators.

Agentic AI systems capable of autonomous multi-step data work are beginning to collapse traditional tool category boundaries, most notably in data preparation, discovery, lineage tracking, and orchestration.

The paper concludes with a forward-looking assessment of how large language models, foundation models, and agentic AI systems will reshape the data tooling landscape through 2030 and beyond, including the critical transition to real-time data architectures.

1. Introduction

1.1 The Evolving Data Landscape

Data has become the central strategic asset of the modern enterprise. Volume, velocity, and variety have grown exponentially, fueled by AI, cloud computing, IoT proliferation, digital commerce, and the ubiquity of SaaS applications. Regulatory requirements have simultaneously elevated data governance from a back-office discipline to a board-level priority.

The tooling ecosystem has gone through several waves of transformation. The first generation was dominated by on-premises relational databases and ETL tools from IBM, Oracle, Informatica, and SAP. The second brought the cloud data warehouse as an analytical hub, with Teradata, Netezza, and Amazon Redshift establishing columnar cloud storage. It was Snowflake, launched in 2012, that effectively closed this era rather than simply belonging to it. By fully separating storage from compute and delivering the warehouse as an elastic managed service, Snowflake made the architectural assumptions of the second generation look dated while most incumbents were still defending them. The third and current generation is defined by three concurrent forces: cloud-native managed services, the decentralization of data ownership through patterns like Data Mesh, and the rapid integration of artificial intelligence into every tier of the data stack. Snowflake has since expanded well beyond the warehouse into ingestion, transformation, governance, and AI, while its closest rival Databricks has converged from the opposite direction, moving from AI and machine learning toward governed analytics. The competition between the two, each trying to own the full data-to-AI lifecycle, is one of the defining dynamics of the current generation.

Two developments since 2023 have accelerated this evolution in ways that deserve particular attention.

First, AI is no longer an adjacent capability; it is becoming part of the data platform itself. Snowflake embeds Cortex AI directly in the warehouse. Databricks ships model training and inference alongside data engineering. BigQuery integrates Gemini for natural language querying and automated pipeline generation. Power BI Copilot turns dashboard creation into a conversation. Organizations evaluating tooling today need to assess AI readiness as a first-order criterion, not a bonus.

Second, the boundary between tool categories is dissolving. Vendors originally built for a single use case are systematically expanding into adjacent spaces. Collibra started as a governance tool and now competes in data catalog, lineage, quality, unstructured data governance and marketplace. Databricks started as a Spark runtime and now offers data lakehouse, catalog, governance, BI, and model deployment. The result is a market moving toward platform consolidation, where a smaller number of vendors cover a broader surface area. This creates both opportunity and risk for buyers: fewer tools and tighter integration on one side, deeper lock-in and feature-depth trade-offs on the other.

Unstructured data deserves specific mention as an area historically underserved by data management tooling built for structured tabular data. Documents, emails, contracts, call recordings, images, video, and social content collectively represent 80–90% of enterprise data by volume, yet most data governance, quality, and catalog tooling was built for relational tables. That gap is closing rapidly. Microsoft Purview now governs SharePoint, Exchange, and Teams content alongside SQL databases. BigID catalogs unstructured files alongside structured tables. Tools like AWS Textract, Google Document AI, Data Dynamics' Zubin and ABBYY Vantage extract structured information from documents at scale.

1.2 Reference Architecture

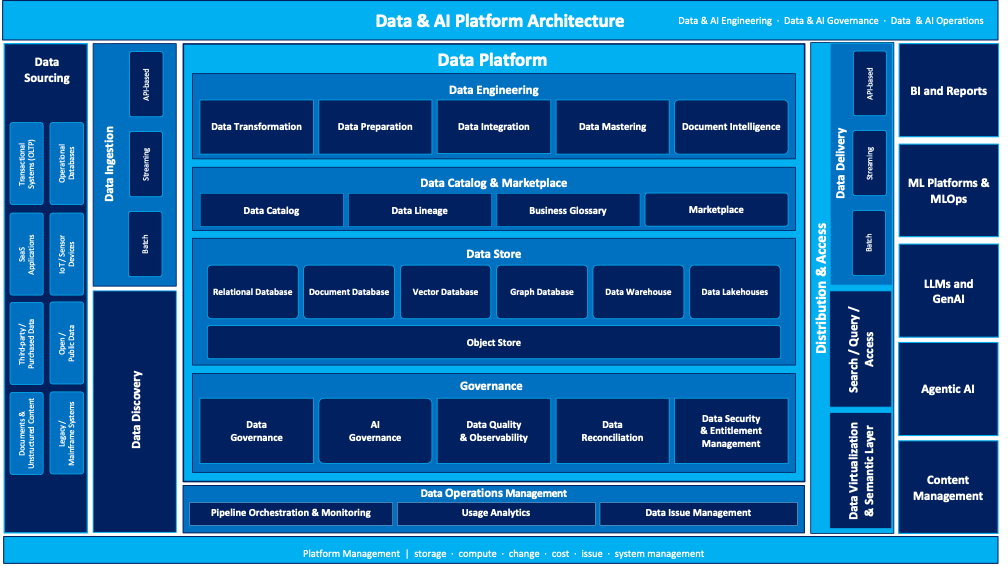

The diagram below illustrates the reference architecture for a modern enterprise data platform, showing how the major capability layers interact, from data sourcing and ingestion through engineering, governance, storage, and distribution to end consumers.

Figure 1 — Enterprise Data Platform Reference Architecture (Element22)

1.3 Purpose and Scope

This paper serves as a reference guide for data architects, Chief Data Officers, enterprise architects, and technology strategists. The scope covers 36 primary tool categories spanning the full data value chain from sourcing to intelligence, covering both commercial and open-source products with particular attention to cloud-native and multi-cloud deployments.

1.4 Research Methodology

Assessments draw on vendor documentation, analyst research (Gartner Magic Quadrant, Forrester Wave, IDC MarketScape), community adoption metrics (GitHub stars, Stack Overflow activity, CNCF landscape data), and practitioner feedback from the broader data engineering community as of Q1 2026. Tool capabilities are rated qualitatively across dimensions relevant to each category. Where a tool spans multiple categories, it is assessed in its primary category and referenced in others.

2. Tool Categories and Market Analysis

2.1 Data Sourcing

| Tool / Platform | Vendor | Deployment | Source Coverage | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Fivetran | Fivetran | SaaS / Cloud | 300+ pre-built connectors, fully managed CDC, automatic schema migration, dbt integration | No | 300+ connectors; gold standard for reliability; auto schema migration; dbt native | Pricing can be significant at scale; limited customization without custom connectors |

| Airbyte | Airbyte (OSS) | OSS / Cloud / Self-hosted | 400+ connectors (community + certified), connector dev kit (CDK), custom connectors | Yes | Largest open-source connector library; cost-effective; CDK allows rapid custom connectors | Community connectors vary in quality; managed cloud adds cost; less polished than Fivetran for enterprise |

| Stitch (Talend) | Talend / Qlik | SaaS | 100+ Singer-based connectors, simple SaaS, incremental replication | No | Simple and accessible; good for mid-market; Singer standard reduces lock-in | Roadmap uncertainty post-Qlik acquisition; limited connector depth; fewer features than Fivetran |

| Meltano | Meltano (OSS) | OSS / Self-hosted | Singer-compatible, GitOps-friendly, dbt and Airflow integration, CLI-first | Yes | GitOps-native; excellent code-first DX; integrates with dbt naturally | Self-managed; community support only; less suitable for non-technical teams |

| Hevo Data | Hevo Data | SaaS | 150+ sources, real-time streaming ingestion, built-in transforms, no-code | No | Good value; real-time ingestion; strong for Asia-Pacific market | Enterprise features still maturing; smaller connector library than Fivetran |

| Debezium | Red Hat (OSS) | OSS / Kafka | Log-based CDC for MySQL, Postgres, MongoDB, Oracle, SQL Server; Kafka Connector | Yes | Industry-standard open CDC; highly reliable; log-based means zero performance impact on source | Requires Kafka operational expertise; limited to CDC use case; no UI |

| Qlik Replicate (Attunity) | Qlik | On-prem / Cloud | CDC-focused, 40+ sources, real-time log-based replication, bidirectional | No | Mature CDC platform; strong enterprise pedigree; heterogeneous target support | Premium pricing; UI dated; requires specialist expertise |

| AWS Glue Connectors | AWS | Cloud (AWS) | JDBC/ODBC, Marketplace connectors, serverless crawlers, Spark-based | No (managed) | Serverless; deep AWS integration; S3, Redshift, RDS crawlers built-in | Connector coverage narrower than Fivetran; requires Spark knowledge for custom logic |

| Azure Data Factory Linked Services | Microsoft | Cloud (Azure) | 100+ connectors, integration runtime for on-prem hybrid, data flow transforms | No (managed) | Native Azure ecosystem; hybrid on-prem support via IR; strong enterprise support | UI complexity grows; Azure-centric; limited compared to Fivetran for SaaS connectors |

| Google Cloud Datastream | Cloud (GCP) | CDC from Oracle, MySQL, PostgreSQL, Spanner to BigQuery/GCS; serverless | No (managed) | Serverless; low-latency CDC into BigQuery; minimal configuration for GCP pipelines | Source coverage limited; BigQuery-centric; not suitable for multi-cloud targets | |

| Snowflake (as source) | Snowflake | Cloud (SaaS) | Snowflake Data Sharing, Dynamic Tables, Change Tracking for CDC from Snowflake tables | No | Zero-copy sharing; near-real-time change tracking; no ETL needed for downstream consumers | Source only; requires target system Snowflake connector; ecosystem dependent |

| Databricks (as source) | Databricks | Cloud (SaaS) | Delta Sharing (open protocol), Delta Change Data Feed, Unity Catalog data product sharing | Delta Sharing: Yes | Open Delta Sharing protocol works with any consumer; CDC via Change Data Feed; Unity Catalog governance | Source only; Delta Sharing consumer ecosystem still maturing vs. Snowflake marketplace |

| Apify / Diffbot | Apify / Diffbot | SaaS | Web scraping, public web data extraction, AI-powered entity extraction | Apify: Yes | Apify open-source actors; Diffbot AI entity extraction is unique; good for public web data pipelines | Not enterprise data sources; legal and rate-limit considerations; Diffbot cost can escalate |

Fivetran leads on connector breadth and managed reliability but faces pressure from Airbyte's open-source model at scale. Debezium remains the standard for production log-based CDC and is now complemented by Flink CDC for streaming use cases. Snowflake and Databricks as data sources are increasingly important: as organizations build data mesh architectures, these platforms are themselves producers of curated data products consumed by downstream systems via Delta Sharing or Snowflake Data Sharing. Cloud platform-native connectors (AWS Glue, Azure Data Factory, Datastream) continue gaining ground for organizations already committed to a single cloud.

2.2 Data Ingestion and Data Delivery

2.2.1 Batch Ingestion

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Apache Spark (batch) | Apache (OSS) | On-prem / Cloud | Distributed in-memory processing, Python/Scala/SQL/R APIs, Delta Lake integration, structured streaming | Yes | De facto standard for large-scale batch; rich ecosystem; Databricks removes ops overhead | High ops complexity without managed service; steep learning curve for custom connectors |

| AWS Glue (ETL) | AWS | Cloud (AWS) | Serverless Spark, visual Glue Studio, Glue Data Catalog integration, auto-scaling, Glue DQ | No (managed) | Serverless Spark; tight S3/Redshift/Athena integration; Glue DQ adds quality checks | Cost can escalate; Spark expertise still required for complex logic; AWS-only |

| Azure Data Factory | Microsoft | Cloud (Azure) | 100+ connectors, code-free data flows, integration runtime for on-prem, Fabric integration | No (managed) | Mature enterprise integration; hybrid on-prem support; strong governance via Purview | UI complexity grows; Spark-based data flows can be slow; largely AWS/GCP-ignored |

| Google Cloud Dataflow | Cloud (GCP) | Managed Apache Beam, unified batch/stream, autoscaling, BigQuery native integration | No (managed) | Serverless auto-scaling; BigQuery native; Beam portability across runtimes | Beam SDK adds abstraction overhead; debugging complex; GCP-centric | |

| Matillion ETL/ELT | Matillion | Cloud (SaaS) | Cloud DW-native ELT for Snowflake/BigQuery/Redshift/Databricks, visual builder, AI-assisted mapping | No | Visual pipeline builder; push-down execution uses DW compute efficiently; AI mapping | DW-centric; not suited to complex non-SQL transforms; per-connector licensing |

| Informatica IDMC | Informatica | Cloud / On-prem | Enterprise ETL/ELT, AI-powered mapping (CLAIRE), pushdown optimization, 500+ connectors | No | Broadest enterprise ETL; CLAIRE AI mapping saves time; strong hybrid support | Premium pricing; complex licensing; CLAIRE still requires human validation |

| IBM DataStage | IBM | On-prem / Cloud | Parallel processing ETL, deep IBM ecosystem, DataStage Next for cloud-native workloads | No | Mature parallel processing; strong in regulated industries; IBM Cloud modernization | Legacy architecture; slower cloud modernization vs. competitors; IBM lock-in risk |

| Talend Data Integration | Talend / Qlik | OSS / Cloud | GUI-based ETL, Java/Spark execution, 900+ components, DQ and governance integration | Yes (OSS Studio) | Large open-source community; extensive component library; DQ integration built-in | Qlik acquisition roadmap uncertainty; Java-heavy; licensing complexity growing |

| Snowflake (native ingestion) | Snowflake | Cloud (SaaS) | COPY INTO, Snowpipe (continuous), Dynamic Tables (declarative materialization), Streams | No | Near-zero latency with Snowpipe; Dynamic Tables replace complex ETL for many patterns; no extra cost | Snowflake-only; not suitable for multi-target ingestion; limited transformation logic vs. Spark |

| Databricks Auto Loader | Databricks | Cloud (SaaS) | Incremental file ingestion from cloud storage, schema inference, schema evolution, DLT integration | No | Seamless lakehouse ingestion; schema evolution built-in; tight Unity Catalog integration | Databricks-only; requires Delta Lake format; not suited for real-time streaming beyond micro-batch |

| Fivetran (ELT) | Fivetran | SaaS / Cloud | Managed ELT pipelines, 300+ source connectors, dbt integration for post-load transformation | No | Fully managed; reliable; excellent for SaaS-to-warehouse patterns; dbt native | Not a transformation engine; pricing at scale; connector-level billing model |

| dlt (data load tool) | dltHub (OSS) | OSS / Python | Python library for declarative pipelines, schema inference, incremental loading, Rust core | Yes | Lightweight; pure Python; great developer experience; fast growing community | Early stage; limited connector library vs. Fivetran; no managed service yet |

2.2.2 Streaming Ingestion

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Throughput / Latency | Operational Complexity |

|---|---|---|---|---|---|---|

| Apache Kafka | Apache / Confluent | OSS / Cloud | Distributed commit log, pub-sub, Kafka Connect ecosystem, Kafka Streams, 700+ connectors | Yes | Millions of msgs/sec; sub-10ms latency; massive ecosystem; battle-tested at hyperscale | Operational complexity (ZooKeeper historically); rebalancing events; requires Kafka expertise to tune |

| Confluent Platform / Cloud | Confluent | Cloud / On-prem | Managed Kafka, Schema Registry, ksqlDB, Connectors, RBAC, FLINK integration, audit logs | Partial (OSS Kafka) | Reduces Kafka ops dramatically; Schema Registry prevents breaking changes; enterprise RBAC | Premium pricing; vendor lock-in risk beyond OSS Kafka; BYOC model needed for regulated industries |

| Apache Flink | Apache (OSS) | On-prem / Cloud | Stateful stream processing, event time semantics, Flink SQL, Flink CDC (source replacement) | Yes | Best stateful streaming; event-time correctness; Flink CDC excellent for DB-to-stream | Operational complexity; JVM tuning; state backend management; steep learning curve |

| AWS Kinesis | AWS | Cloud (AWS) | Data Streams, Firehose (delivery to S3/Redshift), Analytics (Flink-based); fully managed | No | Fully managed; pay-per-use; Firehose zero-ETL to S3/Redshift; Amazon Q integration | AWS-only; Shard management complexity; limited to 7-day retention; harder to tune vs. Kafka |

| Azure Event Hubs | Microsoft | Cloud (Azure) | Kafka-compatible, Stream Analytics (SQL-based), Capture to ADLS, Fabric Real-Time Intelligence | No | Kafka wire compatibility; Fabric RTI makes streaming first-class; minimal migration from Kafka | Kafka compatibility partial; Stream Analytics SQL is limited vs. Flink; Azure-only |

| Google Pub/Sub + Dataflow | Cloud (GCP) | Pub/Sub messaging plus Dataflow (Beam) for stream processing; BigQuery direct streaming | No | Globally distributed; auto-scales to zero; Dataflow exactly-once into BigQuery | Beam SDK complexity; GCP-centric; Pub/Sub ordering guarantees limited vs. Kafka partitions | |

| Apache Pulsar | Apache (OSS) | OSS / StreamNative Cloud | Multi-tenancy, tiered storage, geo-replication, Kafka compatibility layer, functions | Yes | Native tiered storage; strong multi-tenancy; Kafka wire compatible; geo-replication built-in | Smaller ecosystem than Kafka; tooling maturity behind; StreamNative adds cost |

| Redpanda | Redpanda | OSS / Cloud | Kafka-compatible C++ core, no ZooKeeper, very low latency, simple operations, WarpSpeed | Yes | Best p99 latency; 10x fewer nodes than Kafka for same throughput; operational simplicity | Smaller ecosystem than Kafka; enterprise features still maturing; not Kafka 100% feature parity |

| Snowflake Dynamic Tables | Snowflake | Cloud (SaaS) | Declarative streaming/micro-batch materialization, change propagation, freshness targets, DML-based CDC | No | Zero operational overhead; SQL-only; replaces many streaming ETL patterns inside Snowflake | Latency higher than true streaming (minutes); Snowflake-only; SQL transforms only |

| Databricks Structured Streaming | Databricks | Cloud (SaaS) | Spark Structured Streaming, DLT continuous mode, Delta Live Tables, Kafka/Kinesis/Pub-Sub connectors | Spark: Yes | Unified batch/stream in one framework; DLT adds quality and monitoring; excellent Delta Lake integration | Databricks-only for managed; micro-batch model (not true event-driven); higher latency than Flink |

| Google BigQuery Streaming (Storage Write API) | Cloud (GCP) | Native streaming inserts to BigQuery, Storage Write API (committed/buffered/pending modes), exactly-once | No | Sub-second data freshness in BigQuery; exactly-once semantics; no separate streaming infrastructure | BigQuery-only; no intermediate stream processing; requires separate stream processor for transforms |

2.2.3 API-Based Ingestion

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| MuleSoft Anypoint Platform | Salesforce | Cloud / On-prem | API-led connectivity, 500+ connectors, DataWeave transforms, API management, MQ messaging | No | Most comprehensive iPaaS; API management included; Einstein AI mapping assistance; huge connector library | Premium pricing; complex licensing; steep learning curve; heavy for simple use cases |

| Dell Boomi | Boomi | Cloud (SaaS) | iPaaS, 1600+ connectors, MDM, API Management, Flow workflow engine, Boomi AI mapping | No | Largest connector count; Boomi AI reduces mapping time significantly; strong mid-enterprise fit | Less deep API management vs. MuleSoft; some connectors are thin wrappers; cloud-only |

| Workato | Workato | Cloud (SaaS) | Enterprise automation and integration, 1000+ connectors, recipe-based, AI Copilot, API platform | No | Business user accessible; fastest time-to-value for SaaS integration; AI Copilot helpful | Less suited for complex data engineering; limited transformation depth vs. MuleSoft |

| AWS API Gateway + Lambda | AWS | Cloud (AWS) | Custom API ingestion, serverless, event-driven, Step Functions orchestration, EventBridge | No | Infinitely flexible; pay-per-use serverless; tight AWS data service integration | Requires custom code; no pre-built connectors; dev and ops overhead |

| Azure API Management + Logic Apps | Microsoft | Cloud (Azure) | API gateway with policies, Logic Apps for workflow automation, 400+ connectors, Fabric event-driven triggers | No | Deep Azure ecosystem; Logic Apps no-code connectors; APIM handles authentication, throttling, transformation | Logic Apps JSON config verbose; APIM learning curve; Azure-centric; Logic Apps pricing complexity |

| Azure Event Grid + Functions | Microsoft | Cloud (Azure) | Event-driven ingestion, 25+ event sources, serverless Functions, push-based delivery to 20+ handlers | No | Native Azure event routing; near-real-time push delivery; deeply integrated with Azure Data Factory and Fabric | Azure-only; limited transformation; event schema management required externally |

| Apigee (Google) | Cloud (GCP) | Full API management, analytics, developer portal, hybrid gateway, Advanced API Security | No | Best API analytics in market; hybrid deployment; strong monetization and developer portal | Heavy for simple use cases; GCP-centric; pricing per API call can escalate | |

| Celigo | Celigo | Cloud (SaaS) | iPaaS for SaaS integration, pre-built integration apps, FlowBuilder, ERP and CRM connectors, AI mapping | No | Pre-built ERP/CRM integration apps save weeks; AI field mapping; strong NetSuite specialization | Narrower than Boomi/MuleSoft; less suitable for complex data pipelines; SaaS integration focus |

Modern ingestion architectures favor Lambda or Kappa patterns, handling batch and streaming through a common metadata layer. The shift to cloud-native, push-down ELT using the warehouse's own compute has disrupted traditional ETL vendors. Apache Kafka remains the dominant streaming backbone, with Confluent leading the managed space, while Redpanda challenges with C++ performance and operational simplicity.

The significant 2025–2026 development is the native streaming ingestion capabilities from Snowflake (Snowpipe, Dynamic Tables), Databricks (Auto Loader, Structured Streaming), and Google (BigQuery Storage Write API): for teams already on these platforms, separate ingestion tooling is increasingly optional. Enterprise requirements consistently include exactly-once semantics, schema evolution support, end-to-end lineage, and native governance integration.

Data delivery leverages the same connectors, messaging platforms, and streaming infrastructure as ingestion. Snowflake Data Marketplace and Databricks Marketplace extend this to commercial and cross-organization data product distribution, enabling zero-copy data delivery at scale without physical data movement.

2.3 Data Discovery

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Alation Data Intelligence | Alation | Cloud / On-prem | AI-powered search, behavioral analytics, stewardship workflows, SQL editor, query history mining, file system asset coverage | No | Pioneer in ML-powered discovery; strong behavioral analytics surface curation priorities automatically; extending to files and documents | Primarily structured data strength; unstructured coverage still maturing; complex implementation for large estates |

| Atlan | Atlan | Cloud (SaaS) | Collaboration-focused discovery, 50+ integrations, lineage, policies, AI search, embedded glossary, Slack/Teams integration | No | Modern developer-friendly UX; fast-growing; strong OpenMetadata standards support; excellent API extensibility | Newer vendor; enterprise breadth still maturing compared to Collibra and Alation; primarily structured data focus |

| Collibra Data Intelligence Cloud | Collibra | Cloud / On-prem | Enterprise catalog, business glossary, lineage, governance workflows, data marketplace, document assets via Collibra DeasyLabs | No | Market leader; comprehensive structured coverage; document and unstructured data discovery through DeasyLabs integration | High implementation cost and complexity; requires significant ongoing stewardship effort; premium pricing |

| Collibra DeasyLabs | Collibra | Cloud (SaaS) | Unstructured data discovery and classification, AI-powered document metadata extraction, SharePoint/S3/NAS scanning, sensitive data identification in documents | No | Purpose-built for unstructured discovery within Collibra ecosystem; AI-driven metadata extraction; strong compliance use cases | Collibra ecosystem dependency; newer product still building enterprise references; primarily document and file focus |

| DataHub | LinkedIn / Acryl Data | OSS / Cloud (Acryl) | Metadata platform, push/pull ingestion, lineage, search, column-level lineage, custom entities for any asset type | Yes (Apache 2.0) | Leading OSS metadata platform; 9k+ GitHub stars; highly extensible; custom entity model supports unstructured assets | Requires engineering resource to operate OSS version; UI less polished than commercial tools; professional services needed at scale |

| Microsoft Purview | Microsoft | Cloud (Azure) | Automated scanning of Azure SQL, Blob, ADLS, SharePoint, Exchange, Teams, sensitivity labels, classification, M365 data map | No | Strongest unstructured data discovery in market; unique M365 coverage; good structured DW coverage growing rapidly | Azure/M365 ecosystem dependency; non-Microsoft source coverage less deep; governance workflows less mature than Collibra |

| Google Dataplex / Data Catalog | Cloud (GCP) | Unified data management, auto-discovery of GCS objects and BigQuery, tagging, data quality rules, lineage, GCS object coverage | No | Native GCP integration; GCS object discovery covers unstructured file assets; strong BigQuery lineage | GCP-centric; limited coverage outside Google Cloud; business metadata and governance capabilities less mature than specialist tools | |

| AWS Glue Data Catalog | AWS | Cloud (AWS) | Central metadata repository, crawler-based discovery of S3 and JDBC sources, Lake Formation integration, S3 object discovery | No | Foundational AWS data discovery; S3 crawlers discover unstructured file assets; tightly integrated with AWS analytics services | Limited business metadata and search quality; no governance workflow; primarily technical metadata focus |

| BigID | BigID | Cloud (SaaS) | Data discovery across 500+ structured and unstructured sources including S3, SharePoint, NAS, databases; PII identification, classification, data risk scoring | No | Leader for unstructured data discovery; finds sensitive data in files, emails, and cloud storage regardless of format; very broad source coverage | Primarily a security/privacy tool rather than analytics discovery; catalog and lineage features less mature than pure catalog vendors |

| Data Dynamics Zubin | Data Dynamics | Cloud / On-prem | Unstructured data discovery across NAS, S3, SharePoint, file servers; content classification, metadata extraction, compliance identification, storage tiering | No | Strong focus on unstructured data governance and discovery; storage cost optimisation alongside compliance; good for file-heavy organizations | Less known in the market than BigID or Purview; primarily unstructured focus; structured data capabilities limited |

| Ohalo | Ohalo | Cloud (SaaS) | AI-powered unstructured data discovery, semantic search over file stores, auto-classification, GDPR/CCPA compliance discovery across documents and emails | No | Purpose-built for unstructured data compliance; strong semantic AI search; identifies personal data in complex document layouts | Smaller vendor; primarily compliance-driven use case; less suitable as a general-purpose discovery platform |

| Clarista | Clarista | Cloud (SaaS) | AI-native data discovery and analytics, natural language search over business data, automatic insight generation, self-service exploration for non-technical users | No | Excellent natural language query experience; lowers barrier for non-technical discovery; rapid deployment; modern LLM-powered interface | Newer entrant; enterprise governance depth still maturing; best suited for analytics discovery rather than compliance or lineage use cases |

| Elasticsearch / OpenSearch | Elastic / AWS | Cloud / OSS | Full-text search over unstructured and semi-structured content, vector search, NLP-based content discovery, multi-tenant indices | Yes (OpenSearch) | Essential for free-text and semantic search over documents and logs; vector search capability is strong for RAG architectures | Not a metadata catalog; requires engineering to build governance layer; no lineage or stewardship workflow out of the box |

| Secoda | Secoda | Cloud (SaaS) | AI-native discovery, natural language search, automated documentation, Slack/Teams integration, LLM-powered metadata generation | No | Modern AI-first approach; LLM-powered search and documentation; good for teams wanting low-friction discovery with minimal curation overhead | Smaller vendor; enterprise governance breadth limited; primarily structured data; less suitable for complex unstructured estates |

Data discovery is converging with catalog functionality, and the sharpest competitive differentiator today is unstructured data coverage. Microsoft Purview is notably ahead in discovering and classifying M365 content alongside structured databases. BigID leads for breadth across heterogeneous file types. Data Dynamics Zubin and Ohalo serve organizations where the primary concern is governance of file server and cloud object store content rather than database metadata.

Clarista represents a new wave of AI-native discovery tools that prioritize the end-user experience over governance depth, making analytics discovery far more accessible to non-technical stakeholders. For enterprise programs, the most capable organizations combine a structured data catalog such as Alation or Atlan with an unstructured discovery tool, rather than expecting one platform to cover everything equally well. OpenMetadata and OpenLineage standards are reducing lock-in risk on the structured side as the ecosystem matures.

2.4 Data Platform

2.4.1 Data Engineering

Data engineering encompasses all tooling that transforms, prepares, integrates, and masters data within the platform. It covers the compute-intensive work of turning raw ingested data into analytical-ready and ML-ready datasets, as well as the specialized work of establishing master records for critical business entities. Document management is included here as the engineering discipline responsible for processing, classifying, and routing document content as a structured asset within the pipeline.

2.4.1.1 Data Transformation (Pipelines)

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| dbt (data build tool) | Fivetran | OSS / dbt Cloud | SQL-based transformations, modular DAGs, testing, documentation, version control, Semantic Layer, column-level lineage | Yes | De facto ELT standard; 30k+ GitHub stars; version-controlled; column-level lineage from v1.6; semantic layer | SQL-only without add-ons; limited support for complex non-SQL logic; dbt Cloud adds cost |

| Apache Spark | Apache (OSS) | On-prem / Cloud | Distributed transforms, Python/Scala/SQL/R APIs, MLlib, structured streaming, Delta Lake | Yes | Essential for large-scale or complex transforms; supports ML pipelines; Databricks removes ops overhead | Steep learning curve; overkill for simple transforms; Java/Scala debugging complex |

| Snowflake (Snowpark) | Snowflake | Cloud (SaaS) | Python/Java/Scala transforms inside Snowflake, DataFrame API, stored procedures, Dynamic Tables, ML functions | No | Pushdown transforms in Snowflake compute; no data movement; supports Python pandas-like syntax | Snowflake-only; limited to Snowflake ecosystem; Python support newer and still maturing |

| Databricks Delta Live Tables | Databricks | Cloud (Databricks) | Declarative pipeline framework on Spark, DLT expectations, auto-scaling, Unity Catalog integration, Python/SQL | No | Asset-oriented transforms; quality expectations built-in; Unity Catalog integration; continuous and triggered modes | Databricks-only; opinionated framework; debugging more complex than standard notebooks |

| AWS Glue (ETL) | AWS | Cloud (AWS) | Serverless Spark, visual Glue Studio, Glue Data Catalog, Glue Data Quality, Python/Scala | No | Serverless; AWS-native; Glue DQ adds quality checks; visual authoring for non-engineers | Spark expertise required for complex transforms; cost can escalate; AWS-only ecosystem |

| Google Cloud Dataflow (Beam) | Cloud (GCP) | Unified batch/stream transforms via Apache Beam SDK, autoscaling, BigQuery direct write | No | True unified batch/stream; portable to Flink/Spark runners; BigQuery native; serverless | Beam abstraction adds complexity; debugging hard; GCP-centric; steeper learning curve | |

| Matillion ETL/ELT | Matillion | Cloud (SaaS) | Cloud DW-native ELT for Snowflake/BigQuery/Redshift/Databricks, visual builder, AI-assisted column mapping | No | Visual pipeline builder with SQL pushdown; AI mapping accelerates development; good governance hooks | DW-centric; Python components exist but feel bolted on; per-connector licensing |

| Coalesce | Coalesce | Cloud (SaaS) | SQL-first visual ELT for Snowflake, column-aware transforms, documentation, dbt export capability | No | Innovative visual-to-SQL; column-level lineage built-in; excellent Snowflake integration | Snowflake-only currently; growing but smaller community than dbt; newer platform |

| Informatica IDMC (transforms) | Informatica | Cloud / On-prem | Complex transforms, AI-assisted mapping (CLAIRE), pushdown optimisation, MDM integration, data quality | No | Enterprise-grade; CLAIRE AI mapping reduces effort; supports complex multi-source transforms | Premium pricing; complex licensing; CLAIRE still needs human oversight |

| Talend Open Studio | Talend / Qlik | OSS / Cloud | GUI ETL, Java/Spark execution, 900+ components, DQ and governance integration | Yes (Studio) | Open-source community edition; extensive component library; DQ integration baked in | Qlik acquisition uncertainty; Java execution environment heavy; OSS version falling behind |

| Trino / Starburst | Trino (OSS) / Starburst | On-prem / Cloud | Federated SQL query engine, push-down to heterogeneous sources, Iceberg/Hudi/Delta support, ANSI SQL | Yes (Trino) | Federated transforms across multiple stores without data movement; excellent Iceberg support | Not a transform orchestration tool; no pipeline scheduling; complex tuning for performance |

| Ab Initio | Ab Initio Software | On-prem / Cloud | Parallel batch transformation, graphical component-based development (GDE), high-volume data processing, complex joins and aggregations, Co>Operating System for job scheduling, metadata hub, data profiling | No | Unmatched throughput for very large batch workloads; proven at the largest financial institutions for core processing; highly reliable for mission-critical overnight batch; strong parallelism model handles complex multi-source transformations well | Proprietary and closed; pricing is opaque and significant; no cloud-native deployment model; requires specialist Ab Initio skills that are increasingly scarce; poor fit for modern ELT patterns and real-time pipelines; no community or open-source ecosystem |

The transformation landscape has bifurcated. For warehouse-centric analytics, dbt has become the community standard. For large-scale distributed processing, Apache Spark via Databricks, AWS EMR, or Google Dataproc remains the engine of choice. The platform-native transformation services from Snowflake (Snowpark), Databricks (DLT), and AWS (Glue) are increasingly good enough for teams already committed to those platforms, reducing the case for separate transformation tools.

Column-level lineage natively within transformation definitions (dbt 1.6+, DLT), semantic layer support for consistent metric definitions, and incremental/CDC-aware patterns for near-real-time analytics are the modern requirements most organizations still need to fully implement.

2.4.1.2 Data Preparation

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Alteryx Designer / Cloud | Alteryx | Desktop / Cloud | Visual drag-and-drop data prep, 80+ transform tools, predictive analytics, spatial analytics, AI-assisted wrangling, document parsing via Alteryx AI Platform | Partial (Community) | Market leader for business analysts; widest range of built-in connectors; AI-assisted suggestions; strong document processing | Per-seat licensing is expensive; cloud migration still maturing; heavy desktop client for advanced workflows |

| Dataiku DSS | Dataiku | On-prem / Cloud | End-to-end data science platform, visual recipes, Spark/SQL execution, collaborative notebooks, LLM recipe support for unstructured data | Partial (free tier) | Bridges data prep and ML in one platform; strong governance and collaboration features; good unstructured handling via LLM recipes | Broad scope can feel overwhelming; enterprise pricing is significant; full value requires team-wide adoption |

| Google Cloud Dataprep (Trifacta) | Google / Trifacta | Cloud (GCP) | ML-based anomaly detection, intelligent transform suggestions, visual wrangling, BigQuery integration, pipeline publishing | No | Excellent ML-driven suggestions; deep BigQuery/GCS integration; low operational overhead as managed service | Primarily structured/tabular focus; GCP-centric; less suitable outside Google ecosystem; acquired product with evolving roadmap |

| Microsoft Power Query / Dataflows | Microsoft | Cloud / Desktop | M language transforms, Power BI/Fabric integration, 1000+ connectors, AI column suggestions, incremental refresh, Dataflows Gen2 | No | Ubiquitous in Microsoft ecosystem; excellent accessibility for business analysts; Fabric Dataflows Gen2 adds enterprise scale | M language has a learning curve; performance constraints at very large volumes; best value inside Microsoft stack |

| Talend Data Preparation | Talend / Qlik | Cloud / On-prem | Collaborative wrangling, shared recipes, data quality rules integration, semantic discovery, profiling | No | Good governance integration within Talend suite; shared recipe library promotes team reuse; strong DQ integration | Qlik acquisition creates some roadmap uncertainty; less compelling outside the Talend suite; UI less modern than peers |

| OpenRefine | OSS (community) | Desktop / OSS | Free open-source wrangling, clustering algorithms, GREL expressions, Wikidata reconciliation, faceted browsing | Yes | Completely free; powerful clustering for dirty categorical data; widely used in journalism and research; active community | Not suited to enterprise scale or automation; desktop-only; no collaboration; limited structured pipeline integration |

| Ab Initio | Ab Initio Software | On-prem / Cloud | High-performance parallel data processing, graphical data prep flows, complex transformations, metadata management, enterprise-grade lineage | No | Exceptional throughput for very large batch volumes; deep metadata and lineage capabilities; strong in financial services | Very high licensing cost; steep learning curve; limited cloud-native deployment options; requires specialist skills |

| Snowflake (Snowpark / Worksheets) | Snowflake | Cloud (SaaS) | Snowpark Python/Java/Scala data prep inside the warehouse, DataFrame API, vectorised UDFs, notebook workflows, AI/ML functions | No | Eliminates data movement for prep; unified compute and storage; strong scalability; ML functions run in-warehouse | Requires Snowflake as the data platform; Python proficiency needed; limited visual/no-code interface for business users |

| Databricks AutoML / Feature Store | Databricks | Cloud | Automated feature engineering, feature reuse, MLflow integration, Unity Catalog governance, text feature support | Partial (MLflow OSS) | Tightly integrated prep for ML workflows; good for mixed structured and unstructured data; strong for teams building models | Primarily ML-oriented rather than general prep; requires Databricks platform; limited business-user tooling |

| SAS Data Management | SAS | On-prem / Cloud | Data prep, quality, profiling; deep statistical integration; SAS Viya cloud modernization; federation and virtualisation | No | Very strong in regulated industries; SAS Viya modernization underway; deep statistical and analytical integration | Legacy architecture and pricing model; cloud migration slower than competitors; high total cost of ownership |

| ABBYY Vantage | ABBYY | Cloud / On-prem | Document AI, intelligent document processing, OCR, field extraction, table recognition, unstructured to structured conversion | No | Leader in document prep; critical for invoice/contract/form processing at scale; high OCR accuracy; strong NLP field extraction | Primarily document-oriented; limited tabular data prep capability; integration effort required for data pipeline use |

| AWS Textract | AWS | Cloud (AWS) | ML-powered OCR, forms and table extraction, signature detection, queries API for targeted field extraction, S3 and Lambda integration | No | Highly accessible managed document prep; excellent AWS integration; pay-per-use pricing; strong API for pipeline automation | AWS-centric; limited business-user tooling; table extraction can struggle with complex layouts; cost scales with volume |

Modern data preparation tools increasingly need to serve two audiences: data engineers requiring scalable, automated transformation pipelines, and business analysts needing intuitive visual tools. The shift toward cloud-native in-warehouse preparation using Snowpark or Databricks is reducing reliance on standalone prep tools for technical users, but visual tools like Alteryx and Power Query retain strong adoption among non-engineers. Ab Initio fills a specific high-performance niche for organizations processing extremely large batch volumes where throughput is non-negotiable.

The most significant recent change is the formal inclusion of document preparation. ABBYY Vantage and AWS Textract now sit naturally alongside Alteryx and Dataiku in the preparation layer, converting contracts, invoices, and forms into structured datasets ready for analytics or AI training.

2.4.1.3 Data Integration

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| MuleSoft Anypoint Platform | Salesforce | Cloud / On-prem | API-led connectivity, 500+ connectors, DataWeave transformation language, API management gateway, MQ messaging, Composer no-code option, Copilot AI for mapping | No | Gartner MQ leader; comprehensive API plus integration platform; very strong connector ecosystem; Einstein/Copilot AI accelerates integration development significantly | Premium pricing that makes it primarily enterprise territory; DataWeave learning curve; best value when full platform is adopted rather than used selectively |

| Azure API Management + Logic Apps | Microsoft | Cloud (Azure) | Enterprise API gateway, developer portal, OAuth2/OIDC security, policy engine, Logic Apps for event-driven workflow integration, Azure Functions for custom connectors, Event Grid for event routing | No | Comprehensive Azure-native API management plus integration; Event Grid enables event-driven data integration at scale; deep Microsoft ecosystem integration; strong RBAC and security policy engine | Azure-centric; cross-cloud API management less capable than MuleSoft; Logic Apps pricing can escalate; complex scenarios require Azure Functions custom code |

| AWS API Gateway + EventBridge | AWS | Cloud (AWS) | Managed REST/WebSocket/HTTP API gateway, Lambda integration, EventBridge event bus for application and SaaS event routing, Step Functions for workflow orchestration, 200+ SaaS event sources | No | Powerful serverless API and event-driven integration on AWS; EventBridge connects 200+ SaaS applications natively; strong for event-driven data integration architectures; pay-per-use model | AWS-centric; enterprise API management capabilities less mature than MuleSoft or Azure APIM; cross-cloud orchestration requires custom work |

| Informatica IDMC | Informatica | Cloud (SaaS) | Unified cloud data management platform, ETL/ELT, MDM, DQ, API services, CLAIRE AI engine, 500+ connectors, document processing pipelines | No | Broadest enterprise data integration platform; AI-assisted mapping via CLAIRE is genuinely impressive; premium pricing but genuinely comprehensive depth across ETL, API, MDM, and DQ | High cost; best value when adopting the full platform; complex deployment; API management capabilities less mature than MuleSoft |

| Dell Boomi AtomSphere | Boomi | Cloud (SaaS) | iPaaS, 1600+ connectors, MDM, API Management, Flow workflow engine, Boomi AI for mapping and integration suggestions, event-driven integration | No | Largest connector ecosystem in the iPaaS market; strong mid-to-large enterprise adoption; Boomi AI accelerates configuration time substantially; good balance of capability and usability | Less deep for API management than MuleSoft; AI capabilities still maturing; complex processes require professional services; pricing has increased significantly |

| Azure Data Factory / Fabric | Microsoft | Cloud (Azure) | Cloud ETL/ELT, 100+ connectors, SSIS migration pathway, data flows, pipeline monitoring, Mapping Data Flows, Fabric Data Factory integration, Copilot assistance | No | Strong Microsoft-ecosystem data integration; Fabric Data Factory is the strategic direction; mature monitoring and alerting; Copilot AI simplifies pipeline building for common patterns | Azure-centric; less comprehensive iPaaS than MuleSoft or Boomi; no native API management; complex transformation requires custom Spark code |

| AWS Glue + Step Functions | AWS | Cloud (AWS) | Serverless ETL (Glue Spark and Python Shell), Glue Data Quality, Glue Catalog, Step Functions workflow orchestration, Lambda for custom logic, event-driven triggers | No | AWS-native serverless integration; strong serverless model eliminates cluster management; Glue Data Quality adds inline quality checks; pay-per-use cost model | Custom code required for complex transformation logic; limited visual development experience; integration logic can become hard to govern without good practices |

| Talend Data Fabric | Talend / Qlik | Cloud / On-prem | Unified data integration, ETL, API, DQ, catalog, and governance in one platform; Qlik Analytics integration creating combined analytics and integration story | Partial (Talend Open Studio OSS) | Comprehensive platform; open-source edition available for evaluation; Qlik integration adds analytics context; good regulatory compliance documentation | Qlik acquisition creating some roadmap uncertainty; UI less modern than newer tools; cloud-native features building on older architecture |

| Workato | Workato | Cloud (SaaS) | Enterprise automation and integration, 1000+ connectors, low-code recipe builder, API platform, AI Copilot for recipe generation, real-time triggers | No | Fast-growing at the business automation and integration convergence; excellent user experience for non-engineers; AI Copilot for recipe generation is practical and saves significant time | Less deep for heavy data engineering integration than Informatica or Boomi; primarily business process integration focus; large data volumes can be costly |

| Airbyte + dbt (ELT stack) | Airbyte + dbt Labs (OSS) | OSS / Cloud | Open-source ELT: Airbyte for extraction and loading (300+ connectors), dbt for SQL transformation, Git-managed, community connector ecosystem | Yes (MIT / Apache 2.0) | Modern cost-effective OSS integration stack; 300+ source connectors in Airbyte; vibrant community; Git-native workflow; Airbyte Cloud adds managed service option | Less enterprise feature depth than Informatica or MuleSoft; custom connectors require engineering; data quality and governance require additional tooling beyond the base stack |

Enterprise data integration is converging with application integration and API management. Classical ETL tooling is being absorbed by ELT approaches for analytical use cases, while iPaaS platforms expand to cover data integration scenarios. AI-assisted connector configuration and field mapping is now a real differentiator: Boomi AI and CLAIRE in Informatica both reduce integration configuration time significantly for standard patterns. Event-driven integration patterns are growing alongside batch, reflecting the broader push toward real-time data operations.

2.4.1.4 Data Mastering (Master Data Management)

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Informatica MDM (IDMC) | Informatica | Cloud / On-prem | Customer/supplier/product MDM, hierarchy management, match-merge with survivorship rules, CLAIRE AI entity resolution, real-time MDM APIs | No | Gartner leader; comprehensive multi-domain MDM; CLAIRE ML matching strong and continuously improving; real-time APIs for operational MDM use cases; deepest feature set in market | High cost and implementation complexity; best value inside Informatica ecosystem; implementation projects require significant time and specialist expertise |

| Reltio Connected Data Platform | Reltio | Cloud (SaaS) | Cloud-native MDM, knowledge graph-based entity resolution, real-time APIs, Reltio AI, multi-domain support, continuous intelligence | No | Modern cloud-native challenger with ML-native matching; strong API-first architecture; knowledge graph approach handles complex relationships; growing enterprise adoption | Newer vendor building enterprise references; implementation effort still significant; deep customization can be complex; primarily strong in customer MDM |

| Stibo Systems STEP | Stibo Systems | On-prem / Cloud | Multi-domain MDM, product and supplier MDM, digital asset management, PIM combined with MDM, workflow automation, GDSN connectivity | No | Strong in product and supplier domains; comprehensive PIM plus MDM is unique; large enterprise focus; GDSN for retail supply chain is a differentiator | UI less modern than cloud-native peers; primarily product data focus; implementation projects lengthy; less strong in customer MDM compared to Informatica |

| EnterWorks (Syndigo) | Syndigo (EnterWorks) | Cloud (SaaS) | Product information management and MDM, content syndication, digital asset management, channel-specific data publishing, retailer and distributor connectivity | No | Strong product MDM with content syndication; excellent for consumer goods and retail where channel-specific product data distribution is critical; Syndigo network connects to retailers directly | Primarily product MDM and PIM; customer or supplier MDM less capable; primarily consumer goods and retail vertical focus |

| GoldenSource | GoldenSource | Cloud / On-prem | Financial instrument master data, security reference data management, corporate actions, entity (LEI) management, regulatory reporting integration, real-time data distribution | No | Specialist in financial instrument and security reference data; deep capital markets domain knowledge; strong regulatory data management for MiFID II, EMIR, FRTB; proven at global banks | Financial services specialist; not suitable as general-purpose enterprise MDM; high implementation cost; primarily tier-one financial institution focus |

| Gresham Alveo | Gresham Technologies | Cloud / On-prem | Financial data management platform, reference data, pricing, corporate actions, static data governance, data distribution to downstream systems, data quality controls | No | Comprehensive financial data management for capital markets; strong data distribution and feed management capability; good reference data governance; proven in buy-side and sell-side | Financial services specialist; not a general-purpose MDM platform; Gresham primarily known for reconciliation; Alveo market presence building |

| EDM (Gresham) | Gresham Technologies | Cloud / On-prem | Enterprise data management for financial services, instrument data, pricing, valuations, entity data, regulatory data management, data quality and lineage | No | Comprehensive financial EDM from a market data leader; strong instrument data and pricing management; well-established tier-one bank deployments | Gresham strategic direction post acquisition from S&P still clarifying; primarily financial services; high implementation and licensing cost |

| SAP Master Data Governance | SAP | On-prem / Cloud (Rise) | ERP-native MDM, governance workflows, S/4HANA consolidation, Finance/Business Partner/Material domains, central governance hub | No | Essential for SAP-centric enterprises; very deep S/4HANA alignment; governance workflows tightly integrated with ERP processes; Finance and Business Partner domains are very strong | Limited value outside SAP ecosystem; less flexible for non-SAP data; cloud deployment still maturing; tightly coupled to SAP release cycle |

| Semarchy xDM | Semarchy | Cloud / On-prem | Agile multi-domain MDM, graphical data model design, low-code application development, embedded DQ, intelligent matching and merge | No | Strong model-driven agile delivery; good for organizations wanting faster MDM implementation than legacy platforms; growing mid-market adoption; reasonable total cost of ownership | Smaller vendor with more limited global implementation partner network; enterprise-scale references building; less deep for very complex financial or product hierarchies |

| Ataccama ONE (MDM) | Ataccama | Cloud / On-prem | AI-powered MDM, automated profiling, ML match-merge, unified DQ and MDM platform, self-service stewardship workflows, European deployment options | No | Unique DQ plus MDM combination reduces platform count; strong AI-first approach with active learning; European vendor with good EU data residency options; growing enterprise adoption | Less known than Informatica or IBM in large enterprise; full value requires MDM and DQ adoption together; financial services domain depth less established |

| Tamr | Tamr | Cloud (SaaS) | ML-powered entity resolution at scale, customer and supplier MDM, active learning from stewardship feedback, Snowflake and Databricks native integration | No | Modern ML-native approach with genuinely fast implementation versus legacy MDM; active learning improves matching with every stewardship decision; strong for complex matching scenarios | Newer vendor; governance workflow depth building; best for matching-intensive use cases; less comprehensive for hierarchy management and multi-domain governance than Informatica |

Modern MDM requirements have evolved beyond batch match-merge operations. Real-time entity resolution APIs are now required for customer experience use cases where identity must be resolved at the point of interaction in milliseconds. ML-based probabilistic matching with active learning, where the system improves with each stewardship decision, is replacing static rule-based matching for most organizations.

Financial services MDM deserves separate consideration. GoldenSource, Gresham Alveo, and Gresham EDM are specialist platforms for financial instrument reference data, corporate actions, and entity hierarchies, serving requirements that general-purpose enterprise MDM platforms cannot address.

2.4.1.5 Document Management

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Microsoft SharePoint / Syntex | Microsoft | Cloud (Microsoft 365) | Document management, content types, automated classification via Syntex AI, compliance labels, Power Automate integration, SharePoint Premium AI features, Copilot over documents | No | Dominant enterprise document management; Syntex AI adds automated classification and metadata extraction; Microsoft 365 Copilot over documents is powerful; deep compliance integration | Primarily within Microsoft ecosystem; governance complexity at very large scale; SharePoint Premium pricing adds up; search quality across large tenants requires tuning |

| OpenText Content Suite / Documentum | OpenText | On-prem / Cloud | Enterprise content management, records management, archiving, document lifecycle workflows, compliance, OpenText Intelligent Capture for document ingestion | No | Long-established ECM; very strong in regulated industries (pharma, legal, financial); mature records management and compliance capabilities; broad deployment across large enterprises | Legacy architecture limiting agility; modernization to cloud is slower than Microsoft; complex licensing; less compelling for new deployments versus modern cloud-native alternatives |

| Box | Box | Cloud (SaaS) | Cloud content management, Box AI for classification and content extraction and Q&A over documents, metadata templates, Box Sign, secure collaboration, developer APIs | No | Strong enterprise cloud content platform; Box AI adds classification, extraction, and document Q&A natively; excellent API for integration; security and compliance certifications comprehensive | Collaboration focus rather than deep governance; metadata model less powerful than SharePoint for complex content types; ECM workflow depth less than OpenText |

| Data Dynamics Zubin | Data Dynamics | Cloud / On-prem | Unstructured data management platform, NAS/S3/SharePoint/file server content management, metadata extraction, retention automation, storage tiering, GDPR and HIPAA compliance for documents | No | Comprehensive unstructured data lifecycle management combining governance, compliance, cost optimization, and content search; strong for organizations with large NAS and file server estates | Primarily unstructured data focus; structured database governance is not the strength; less well known than SharePoint or OpenText in ECM market |

| Alfresco (Hyland) | Hyland | Cloud / On-prem | Open-source ECM, document workflows, records management, enterprise search, process automation, API-first integration | Yes (Community Edition) | Strong open-source ECM heritage; Hyland acquisition brings enterprise support; good process workflow automation; API-first design for data pipeline integration; flexible deployment | Community edition limited vs. enterprise; smaller market than SharePoint or OpenText; Hyland portfolio complexity post-acquisitions |

| M-Files | M-Files | Cloud / On-prem | Metadata-driven document management, AI-based automatic classification, version control, workflow automation, vault-based access control, Teams and Salesforce integration | No | Unique metadata-centric approach where documents are found by what they are rather than where they are stored; strong AI classification; good, regulated industry support | Smaller market presence; metadata model requires investment to design and maintain; less known outside Nordics and professional services markets |

| ABBYY Vantage | ABBYY | Cloud / On-prem | Intelligent document processing, OCR, form and table extraction, NLP-based field recognition, low-code skill builder, API-first integration, human-in-the-loop review | No | Market leader for automated document extraction and processing; IDP platform converts documents to structured data; high OCR accuracy on complex layouts; API-first for pipeline integration | Primarily document extraction rather than content storage and lifecycle management; integration effort required for ECM workflows; skilled builder needed for complex document types |

| Coveo | Coveo | Cloud (SaaS) | AI-powered enterprise search across SharePoint/Confluence/Salesforce/web/email, relevance tuning, behavioral analytics, semantic search, customer-facing search integration | No | Best unified search across heterogeneous document repositories; AI relevance model improves continuously with usage; good for customer-facing and employee-facing search use cases | Primarily a search layer, not a document lifecycle management platform; governance capabilities limited; pricing significant for large enterprises |

Document management has experienced a step-change transformation with the embedding of AI capabilities. The traditional distinction between ECM platforms focused on storage and lifecycle management and AI platforms focused on content extraction is dissolving: modern ECM vendors (Microsoft Syntex, Box AI, M-Files) now offer intelligent classification, automated metadata generation, and document Q&A.

For most enterprises, the document management stack has two layers: a storage and governance layer (SharePoint, Box, or OpenText for lifecycle management and compliance) and an AI processing layer (ABBYY, AWS Textract, or Azure Document Intelligence for converting document content into structured pipeline-ready data). Organizations should evaluate both layers and ensure they are connected, as the value of AI document extraction is fully realized only when the extracted structured data flows into governed analytical stores and AI training pipelines.

2.4.2 Data Catalog and Marketplace

The data catalog and marketplace layer covers three closely related capabilities: the central metadata repository for all data assets (catalog), the tracking of data movement and transformation (lineage), and the shared business vocabulary that aligns technical metadata with business meaning (business glossary). Together these form the foundation of the governed, discoverable data estate. Catalog marketplace features enable internal and external data product publication and consumption.

2.4.2.1 Data Catalog

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Collibra Data Intelligence Cloud | Collibra | Cloud / On-prem | Policy-driven catalog, automated classification, lineage, business glossary, data marketplace, document assets via DeasyLabs; DCAT metadata export supported | No | Most comprehensive enterprise catalog; gold standard governance workflows; strong unstructured coverage via DeasyLabs and BigID integrations | High implementation effort and cost; requires dedicated stewardship team; complex for smaller organizations |

| Alation Data Catalog | Alation | Cloud / On-prem | Behavioral ML auto-documentation, curation campaigns, stewardship dashboards, file system scanning, governance workflows; REST API for DCAT alignment | No | Strong behavioral analytics surface curation priorities; trusted enterprise catalog with proven ROI; extending toward unstructured asset types | DCAT export requires custom integration; unstructured coverage still maturing; implementation effort significant |

| Atlan | Atlan | Cloud (SaaS) | Modern developer-plus-analyst catalog, embedded lineage (300+ sources), policy management, AI metadata agents, custom asset types for documents and models; OpenMetadata standards | No | Fastest-growing modern catalog; API-first; excellent UX; custom asset types well-suited to non-tabular data; strong OpenMetadata standards alignment | Newer vendor; enterprise breadth building; governance workflow depth developing compared to Collibra |

| DataHub | Acryl Data / OSS | OSS / Cloud | Extensible metadata graph, configurable entities, push/pull ingestion, column-level lineage, custom entities for documents and ML models; DCAT mapping via custom ingestion | Yes (Apache 2.0) | Best OSS catalog; highly extensible architecture; custom entity model uniquely suited to non-tabular assets; strong community | Requires engineering resource for OSS operation; UI less polished than commercial tools; DCAT support requires custom work |

| OpenMetadata | OpenMetadata (OSS) | OSS / Cloud | Unified metadata platform, 80+ connectors, data quality integration, collaboration, schema versioning, REST APIs; DCAT-compatible metadata model | Yes (Apache 2.0) | Strong open-source alternative; active community adding connectors; DCAT-compatible design from the outset; good governance features | Smaller ecosystem than DataHub; production deployments require engineering investment; commercial support limited |

| Snowflake Horizon Catalog | Snowflake | Cloud (SaaS) | Native catalog for Snowflake objects, automated tagging, sensitivity classification, governance policies, access history, data quality rules, cross-cloud metadata; DCAT metadata exportable | No | Zero-friction for Snowflake users; unified catalog and governance in one platform; strong classification and policy enforcement natively | Snowflake-only scope; external source coverage limited without additional tooling; less suitable as enterprise-wide catalog |

| Databricks Unity Catalog | Databricks | Cloud (SaaS) | Unified catalog for tables, models, notebooks, and files in Delta Lake; column-level lineage, fine-grained access control, AI/BI governance; Delta Sharing for external catalog exchange | No | Excellent for Databricks-centric data estates; covers structured and ML assets in one place; strong lineage for Delta pipelines | Databricks-centric; multi-cloud catalog consolidation complex; limited business user tooling compared to Collibra or Atlan |

| Microsoft Purview | Microsoft | Cloud (Azure / M365) | Automated data map, sensitivity labels, DLP integration, Teams/M365 lineage, SharePoint and Exchange cataloging; DCAT-inspired taxonomy model | No | Best catalog for unstructured and semi-structured Microsoft content; unique M365 coverage; expanding structured DW coverage rapidly | Azure/M365 ecosystem dependency; DCAT compliance limited; governance workflows less mature than dedicated catalog vendors |

| Google Dataplex | Cloud (GCP) | Unified data management across BigQuery, GCS, and Bigtable; automated tagging, data quality, lineage, GCS object cataloging; BigQuery Data Catalog integration; DCAT-based APIs | No | Native GCP integration; GCS object coverage brings unstructured files into catalog; DCAT-based API design; strong BigQuery lineage | GCP-centric; limited outside Google Cloud; governance depth less than specialist catalog tools | |

| Informatica Enterprise Data Catalog | Informatica | Cloud / On-prem | AI-powered catalog (CLAIRE), automated scanning of 300+ sources including file systems and cloud storage, S3 and NAS coverage; DCAT metadata export available | No | Deep Informatica suite integration; CLAIRE AI provides impressive, automated enrichment; broad source coverage including file systems | Best value inside Informatica ecosystem; standalone adoption less compelling; complex deployment |

| IBM Knowledge Catalog | IBM | Cloud (IBM Cloud) | Automated metadata enrichment, data classes, business terms, Watson AI governance, Cloud Pak for Data integration; DCAT-aligned metadata model | No | Strong Watson AI enrichment; good compliance mapping; IBM Cloud-native deployment; DCAT alignment in metadata model | IBM Cloud-centric; limited adoption outside IBM ecosystem; complex setup; pricing opacity |

| Data Dynamics Zubin | Data Dynamics | Cloud / On-prem | Unstructured data catalog and governance across NAS, S3, SharePoint, file servers; content classification, metadata extraction, GDPR inventory, retention management | No | Strong unstructured data catalog; storage cost and compliance optimization alongside cataloging; good for file-heavy organizations | Primarily unstructured focus; structured database catalog capability limited; less known than BigID |

| BigID | BigID | Cloud (SaaS) | Cataloging across 500+ structured and unstructured source types, PII inventory, sensitivity classification, S3/SharePoint/NAS/email cataloging, data risk scoring | No | Widest source coverage for unstructured cataloging; identifies sensitive data anywhere in the estate; proven at enterprise scale | Primarily security and privacy-focused rather than analytics catalog; lineage and business glossary less mature |

| erwin Data Intelligence | erwin (Quest) | On-prem / Cloud | Metadata management, lineage, business glossary, data literacy, process modelling, DCAT export support for open data publishing | No | Strong in regulated industries; deep data modelling heritage; DCAT export for open data use cases; good compliance documentation | Modernizing slowly to cloud; less competitive UX compared to modern catalogs; smaller community |

| Securiti.ai Data Catalog | Securiti | Cloud (SaaS) | Automated data discovery and classification across structured and unstructured sources, AI-powered PII and sensitive data cataloging, privacy context layered on catalog assets, cross-cloud scanning (AWS, Azure, GCP), data inventory for GDPR and CCPA compliance, catalog integrated with consent and DSAR workflows | No | Unique in combining catalog with privacy intelligence natively; auto-classification of sensitive data across 500+ source types means catalog entries arrive with privacy context already attached; strong for organizations where compliance is the primary driver for cataloging; cross-cloud coverage is broad | Catalog depth is secondary to the privacy and compliance mission; business glossary, stewardship workflows, and data lineage are less developed than Collibra or Atlan; not the right primary catalog for organizations whose main need is analytics governance rather than privacy compliance; best treated as a specialist privacy catalog rather than a general-purpose enterprise catalog |

| Ataccama ONE Catalog | Ataccama | On-prem / Cloud | Automated data discovery and profiling, AI-powered metadata classification, business glossary, data quality scoring surfaced in catalog, MDM integration, data lineage, role-based access, European data residency options | No | Strong combination of catalog and data quality in a single platform; DQ scores are natively embedded in catalog asset views so consumers can see fitness-for-purpose before using data; MDM integration means mastered entities are cataloged with quality context; good option for regulated industries requiring EU data residency | Less well known than Collibra or Alation in the catalog market; primarily gains traction where DQ and MDM are also in scope rather than as a standalone catalog purchase; UI and developer experience less modern than Atlan; smaller partner ecosystem and community than the market leaders |

Enterprise data catalogs are evaluated on five dimensions: automated metadata harvesting with minimal manual curation overhead; column-level lineage across heterogeneous systems; AI-powered enrichment and search; collaborative governance workflows; and openness through APIs and standards such as DCAT. A sixth dimension is becoming critical: unstructured asset coverage. Organizations that govern only structured data are leaving the majority of their data estate ungoverned.

Most enterprises will combine two or three catalog tools: a comprehensive governance platform, a modern developer-first catalog, and a specialist unstructured data catalog.

2.4.2.2 Data Lineage

| Tool / Platform | Vendor | Deployment | Key Capabilities | OSS / OpenLineage | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| Collibra Lineage (incl. IBM Manta) | Collibra | Cloud / On-prem | Automated lineage across 60+ systems, column-level, data flow visualization, impact analysis, regulatory reports; deep SQL parsing via IBM Manta licensing | No / OpenLineage connector | Most comprehensive enterprise lineage; IBM Manta licensing added industry-leading SQL parsing; document flow tracking via Manta parser | High cost and implementation complexity; Manta integration still maturing post-licensing; resource-intensive scanning |

| IBM Manta | IBM (acquired Manta) | On-prem / Cloud | Deep SQL parsing for 30+ platforms, stored procedures, ETL tool analysis, cross-system lineage, BI report lineage, document flow modelling | No / OpenLineage output | Most accurate SQL-parsing lineage in market; acquired by IBM then licensed to Collibra; strong BI layer coverage; document pipeline lineage capability | Post-acquisition positioning unclear; requires Collibra or IBM platform; complex to deploy standalone |

| Alation Lineage | Alation | Cloud / On-prem | Query-based lineage mining, behavioral intelligence, column-level, impact analysis, integrated catalog; OpenLineage event ingestion | No / OpenLineage supported | Accurate lineage through query mining rather than parsing; well-integrated with Alation catalog; OpenLineage events supported for pipeline lineage | Limited lineage outside SQL workloads; stored procedure and ETL parsing less deep than IBM Manta |

| Atlan Lineage | Atlan | Cloud (SaaS) | Automated lineage from 300+ sources, column-level, OpenLineage native, impact analysis, data product lineage, custom entity lineage | No / OpenLineage native | Modern approach with OpenLineage native integration; excellent visualization; growing asset type coverage including non-tabular; fast connector growth | Newer vendor; lineage depth for complex SQL stored procedures still maturing compared to IBM Manta or Informatica |

| DataHub Lineage | Acryl / OSS | OSS / Cloud | Push/pull lineage, column-level, OpenLineage integration, transformation node details, custom entity lineage for documents and models | Yes / OpenLineage native | Best OSS lineage; extensible custom entities allow lineage for document and model pipelines; OpenLineage native; active community | Requires engineering resource for production operation; UI less polished than commercial tools; RBAC governance less mature |